
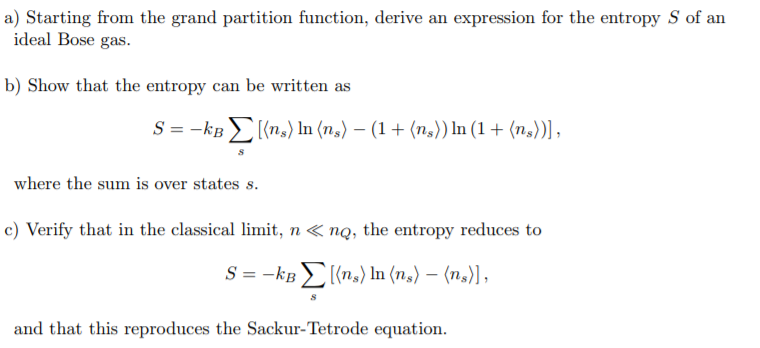

We’re having a lively conversation about different notions related to entropy, and how to understand these using category theory. I don’t want to stop this conversation, but I have something long to say, which seems like a good excuse for a new blog post.

A while back, Tom Leinster took Faddeev’s characterization of Shannon entropy and gave it a slick formulation in terms of operads. I’ve been trying to understand this slick formulation a bit better, and I’ve made a little progress.

Tom’s idea revolves around a special law obeyed by the Shannon entropy. I’ll remind you of that law, or tell you about it for the first time in case you didn’t catch it yet. I’ll explain it in a very lowbrow way, as opposed to Tom’s beautiful highbrow way. Then, I’ll derive it from a simpler law obeyed by something called the ‘partition function’.

I like this, because I’ve got a bit of physics in my blood, and physicists know that the partition function is a very important concept. But I hope you’ll see: you don’t need to know physics to understand or enjoy this stuff!

Suppose you live in a town with a limited number of tolerable restaurants. You randomly choose a restaurant according to a certain probability distribution $P$. If you go to the $i$th restaurant, you then choose a dish from the menu according to some probability distribution $Q_i$. How surprising will your choice be, on average?

Note: I’m only talking about how surprised you are by the choice itself, not about any additional surprise due to the salad being exceptionally tasty, or the cook having dropped her wedding ring into your soup, or something like that. So if there’s only one restaurant in town and they only serve one dish, you won’t be at all surprised by your choice. But if there were thousands of restaurants serving hundreds of dishes each, there’d be room for a lot of surprise.

This still sounds like an impossibly vague puzzle. So, I should admit that while ‘surprise’ sounds psychological and very complicated, I’m really just using it as a cute synonym for Shannon entropy. Shannon entropy can be thought of as a measure of ‘expected surprise’. Now, ‘expected surprise’ sounds like an oxymoron: how can something expected be a surprise? But in this context ‘expected’ means ‘average’. The idea is that your ‘surprise’ at an outcome with probability $p$ is defined to be $-\ln(p)$. Then, if there are a bunch of possible outcomes distributed according to some probability distribution $P$, the ‘expected’ surprise or Shannon entropy is:

$$ -\sum_i p_i \ln(p_i) $$

where $p_i$ is the probability of the $i$th outcome. This is a weighted average where each surprise is weighted by its probability of happening. So, we really do have a well-defined math puzzle, and it’s not even very hard.

Glomming together probabilities

But the interesting thing about this problem is that it involves an operation which I’ll call ‘glomming together’ probability distributions. First you choose a restaurant according to some probability distribution $P$ on the set of restaurants. Then you choose a meal according to some probability distribution $Q_i$. If there are $n$ restaurants in town, you wind up eating meals in a way described by some probability distribution.

Suppose $P$ is a probability distribution on the set …

In math jargon, the product rule says that taking the derivative of a function at a point is not a homomorphism from smooth functions to real numbers: it’s a ‘derivation’.
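If you’d like to play with the restaurant puzzle numerically, here is a minimal Python sketch. It computes the expected surprise, with surprise defined as $-\ln(p)$, and gloms together a distribution over restaurants with one menu distribution per restaurant. The function names `surprise`, `shannon_entropy` and `glom`, and the little two-restaurant town, are made up for illustration and are not from the original discussion. The last lines check that the entropy of the glommed distribution agrees with the entropy of $P$ plus the $P$-weighted average of the entropies of the $Q_i$. That identity is the standard chain rule for Shannon entropy; treating it as the ‘special law’ meant in this post is an assumption on my part.

```python
import math


def surprise(p):
    """Surprise at an outcome of probability p, defined as -ln(p)."""
    return -math.log(p)


def shannon_entropy(dist):
    """Expected surprise: each surprise weighted by its probability."""
    # Outcomes with probability 0 never happen, so they are skipped.
    return sum(p * surprise(p) for p in dist if p > 0)


def glom(P, Qs):
    """Glom P (over restaurants) together with one menu distribution per restaurant.

    Returns the probabilities of all (restaurant, dish) pairs as a flat list.
    """
    if len(P) != len(Qs):
        raise ValueError("need exactly one menu distribution per restaurant")
    return [p * q for p, Q in zip(P, Qs) for q in Q]


# Hypothetical town: two restaurants, serving 2 and 3 dishes respectively.
P = [0.5, 0.5]
Qs = [[0.5, 0.5], [0.2, 0.3, 0.5]]

meals = glom(P, Qs)
total = shannon_entropy(meals)

# Assumed law (standard chain rule): entropy of P plus the
# P-weighted average of the entropies of the menus.
chain = shannon_entropy(P) + sum(p * shannon_entropy(Q) for p, Q in zip(P, Qs))

print(meals)
print(total, chain)
```

The two printed entropies should agree up to floating-point rounding. A town with one restaurant serving one dish, `glom([1.0], [[1.0]])`, gives an expected surprise of zero, matching the remark that you won’t be surprised at all in that case.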
